The Truth About LLM Workloads: Why One-Size-Fits-All APIs Are Costing You

We hold this truth to be self-evident: not all workloads are created equal.

But for large language models, this truth is far from universally acknowledged. Most organizations building LLM applications get their AI from an API. These APIs hide the varied costs and engineering trade-offs of distinct workloads behind deceptively simple per-token pricing.

However, the truth will out. The era of model API dominance is ending. This shift is thanks to excellent work on open source models by organizations like DeepSeek and Alibaba Qwen, which erode the benefits of proprietary model APIs. It’s also due to excellent work on open source inference engines like vLLM and SGLang, which erode the benefits of APIs powered by proprietary inference stacks.

Engineers who wish to take advantage of this technological change must understand their workloads in greater detail. This understanding is essential to properly architect and optimize their systems.

In this guide, we’ll walk through the workloads and requirements we’ve observed in the market while working with leading organizations deploying inference to production at scale. We’ll explain the challenges LLM engineers face when building for these workloads and how they solve those challenges.

The Three Kingdoms of LLM Workloads: Offline, Online, and Semi-Online

In the more mature world of databases, there is a well-known split between transaction processing (OLTP, like shopping carts) and analytical processing (OLAP, like annual reports). In between are hybrid workloads with characteristics of both.

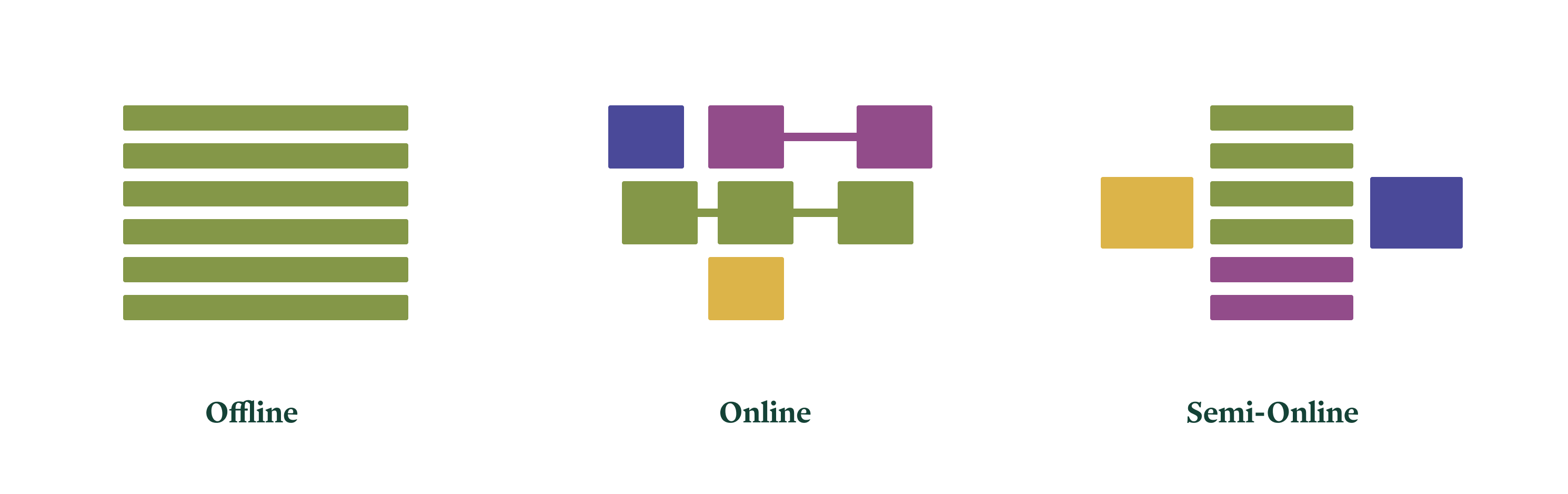

A similar three-part division helps us organize LLM workloads:

- Offline or Analytical Workloads: These operate in batch mode, write to data stores asynchronously, and demand maximum throughput above all else.
- Online or Interactive Workloads: These operate in streaming mode, communicate synchronously with humans, and demand extremely low latency.
- Semi-Online or Bursty Workloads: These operate on streams of batches, communicate with other live computer systems, and demand flexible, scalable infrastructure.

Our core recommendations for each are:

- For offline workloads, we recommend using vLLM via asynchronous RPC to auto-scaled compute capacity.
- For online workloads, we recommend using SGLang with ample tensor parallelism and EAGLE-3 speculative decoding on high-performance GPUs, accessed via low-overhead HTTP proxies.
- For semi-online workloads, we recommend using either engine with rapid autoscaling of compute capacity that can handle highly variable load.

We will unpack and justify these recommendations with reference to specific applications in the remainder of this document.

Offline Workloads: The Tyranny of Throughput

Consider these scenarios:

- A data transformation service that uses an LLM to augment and update every row in a massive dataset.
- A leading video transcription company that needs to generate LLM summaries for thousands of recorded calls for later search and retrieval.

These systems are offline. They produce information for long-term storage in another computer system like a database or data warehouse. Workloads are submitted as bulk “jobs” composed of many LLM requests. The entire job should be completed quickly for cost reasons, but no single request requires an immediate, sub-second response. The scale of the job exposes substantial parallelism, which allows for significant economies of scale.

While generally easier to architect than interactive systems—after all, computers began as batch-processing machines—offline systems still present distinct challenges.

The Core Challenge: Maximizing Throughput Per Dollar

The fundamental challenge of offline, batch workloads is to maximize processing throughput while controlling cost by aggressively leveraging intra-batch task parallelism.

This is good news at a hardware level. GPUs, the most popular hardware for LLM inference, are architecturally designed for maximum throughput. Features like per-clock-cycle context switching, large matrix multiplication units (Tensor Cores), and a task-parallel programming model make it easier to write inference kernels that saturate the GPU’s compute resources. Furthermore, the training of LLMs is itself a massive offline, batch workload, which means new hardware often prioritizes these throughput characteristics.

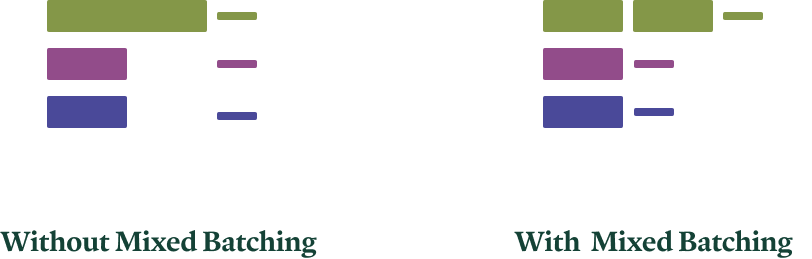

However, efficient kernels alone are not enough. To fully exploit parallelism, the system must intelligently schedule work. For instance, LLM inference for a single task splits into two phases: prefill (prompt processing) and decode (token generation). Prefill work can be further broken into chunks. With careful scheduling, all these types of work for different tasks can be executed together in the same batch.

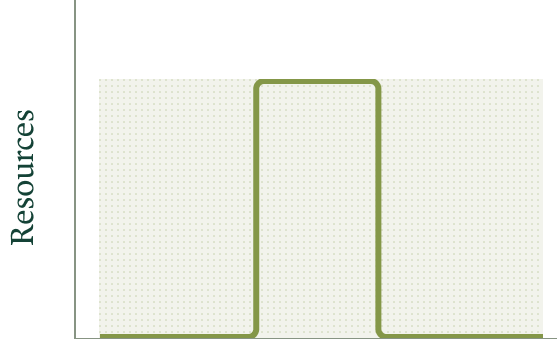

With mixed batching, less compute-intensive decode work (thin lines) can piggyback on more compute-intensive prefill work (thick lines), leading to better GPU utilization and faster overall job completion.
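To make the scheduling idea concrete, here is a minimal sketch of packing one engine step under a fixed token budget. It is a toy model, not vLLM's scheduler: the `Request` class, the `build_batch` function, and the `token_budget` parameter are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Request:
    """One in-flight LLM request (hypothetical bookkeeping, not an engine API)."""
    name: str
    prompt_tokens_left: int   # prompt tokens still waiting for prefill
    decode_tokens_left: int   # output tokens still to generate


def build_batch(requests: list[Request], token_budget: int) -> list[tuple[str, str, int]]:
    """Pack one step: decode tokens first, then chunks of pending prompts.

    Returns (request name, phase, token count) entries whose counts sum to
    at most token_budget.
    """
    batch = []
    budget = token_budget
    # Decode work is thin (one token per request), so schedule it first.
    for r in requests:
        if budget == 0:
            break
        if r.prompt_tokens_left == 0 and r.decode_tokens_left > 0:
            batch.append((r.name, "decode", 1))
            r.decode_tokens_left -= 1
            budget -= 1
    # Fill whatever budget remains with prefill chunks.
    for r in requests:
        if budget == 0:
            break
        if r.prompt_tokens_left > 0:
            chunk = min(r.prompt_tokens_left, budget)
            batch.append((r.name, "prefill", chunk))
            r.prompt_tokens_left -= chunk
            budget -= chunk
    return batch


requests = [
    Request("a", prompt_tokens_left=0, decode_tokens_left=3),
    Request("b", prompt_tokens_left=0, decode_tokens_left=1),
    Request("c", prompt_tokens_left=10, decode_tokens_left=2),
]
first_step = build_batch(requests, token_budget=8)
second_step = build_batch(requests, token_budget=8)
```

In this sketch the long prompt of request `c` is split across two steps, and the decode tokens of `a` and `b` ride along in the same batches instead of waiting for the prefill to finish.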

The vLLM inference engine has strong, built-in support for these advanced scheduling optimizations. For this reason, we currently recommend it for throughput-sensitive, offline workloads.

How To Implement for High Throughput

To optimize for throughput (and throughput per dollar) in offline applications, make the following architectural choices:

- Use the Right Engine: Run on vLLM with its async scheduling and chunked prefill capabilities enabled.
- Batch Aggressively: Send large batches in each request to expose maximum parallelism to the engine. This is most straightforward using an offline-oriented interface like the `LLM` abstraction in vLLM’s Python SDK, rather than an online-serving HTTP API.
- Queue for Scale: Use asynchronous RPC patterns to queue up vast numbers of requests for parallel processing and later retrieval of results.
- Right-Size Your GPUs: Limit the number of GPUs per replica to the minimum required to run a batch large enough to saturate the GPU’s compute resources. Excess GPU capacity is better used to run more parallel replicas, not larger ones.
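The "batch aggressively" and "queue for scale" choices above can be sketched with the standard library alone. This is an illustration under stated assumptions: `summarize_batch` is a hypothetical stand-in for a remote call that runs one large batch on a GPU replica, and a thread pool plays the role of the RPC layer.

```python
from concurrent.futures import ThreadPoolExecutor


def summarize_batch(prompts: list[str]) -> list[str]:
    """Hypothetical stand-in for an RPC that runs one batch on a GPU replica."""
    return [f"summary of {p}" for p in prompts]


def chunked(items: list[str], size: int) -> list[list[str]]:
    """Split a job into large batches so each call exposes plenty of parallelism."""
    return [items[i:i + size] for i in range(0, len(items), size)]


def run_job(prompts: list[str], batch_size: int, max_replicas: int) -> list[str]:
    """Submit every batch up front, then collect results in submission order."""
    with ThreadPoolExecutor(max_workers=max_replicas) as pool:
        # submit() returns a future immediately: the "queue now" half.
        futures = [pool.submit(summarize_batch, b) for b in chunked(prompts, batch_size)]
        # result() blocks until that batch is done: the "retrieve later" half.
        return [out for f in futures for out in f.result()]


results = run_job([f"call-{i}" for i in range(10)], batch_size=4, max_replicas=2)
```

In a real deployment the futures would more likely be durable job handles that can be polled from another process, so results survive a client restart.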

Frequently Asked Questions (FAQ)

Q: If it’s an offline job, why does speed matter? Can’t it just run slowly in the background?

A: Time is money, especially in the cloud. A job that processes 1 million records with double the throughput uses half the GPU hours, directly cutting your compute bill in half. At scale, this efficiency difference translates to massive operational savings.
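The arithmetic behind that answer is short enough to write down. The throughput and price figures below are made-up placeholders, not measurements; only the relationship between them matters.

```python
def job_cost(records: int, records_per_gpu_second: float, dollars_per_gpu_hour: float) -> tuple[float, float]:
    """Return (GPU hours, dollars) needed to process a job at a given throughput."""
    gpu_hours = records / records_per_gpu_second / 3600
    return gpu_hours, gpu_hours * dollars_per_gpu_hour


# Hypothetical numbers: 50 vs. 100 records per GPU-second at $4 per GPU-hour.
baseline_hours, baseline_dollars = job_cost(1_000_000, 50.0, 4.0)
doubled_hours, doubled_dollars = job_cost(1_000_000, 100.0, 4.0)
```

Doubling `records_per_gpu_second` halves both return values, whatever the hourly price is.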

Q: Can’t I just run open-source vLLM on my own servers?

A: You absolutely can. However, this requires you to manage the entire GPU cluster, handle job queuing, monitoring, and fault tolerance. A cloud platform’s value is in abstracting this complexity away, providing elastic, pay-as-you-go resources, and using multi-tenancy to smooth out costs so you pay for compute, not overhead.

Looking Ahead: The Future of Offline Work

As models become more reliable and their use more commonplace, we expect background batch workloads to become as ubiquitous and unremarkable as daily data analytics jobs are today—a fundamental piece of business infrastructure.

An interesting pattern in GPU economics supports this: the number of floating-point operations per dollar (FLOPs/$) remains roughly constant across generations. This means that for jobs prioritizing throughput per dollar over throughput per second, older, more available GPU models can be exceptionally cost-effective.

Online Workloads: The Relentless Pursuit of Low Latency

Consider these real-time scenarios:

- A voice AI agent that needs to participate in a live phone call with a human customer, responding naturally within the flow of conversation.
- An AI-powered code editor that must serve “smart” autocompletion suggestions in the brief pause while a developer thinks about their next line.

These systems are online. A human user is actively interacting, expecting responses that match human reaction times—typically a few hundred milliseconds at most. Users create multi-turn conversations through repeated interactions.

Building online systems is extremely challenging. They are profoundly performance-sensitive. Performance requirements have a way of melting abstractions and forcing tight coupling of components that you might wish to keep separate. Yet, they can be built if you solve the attendant challenges.

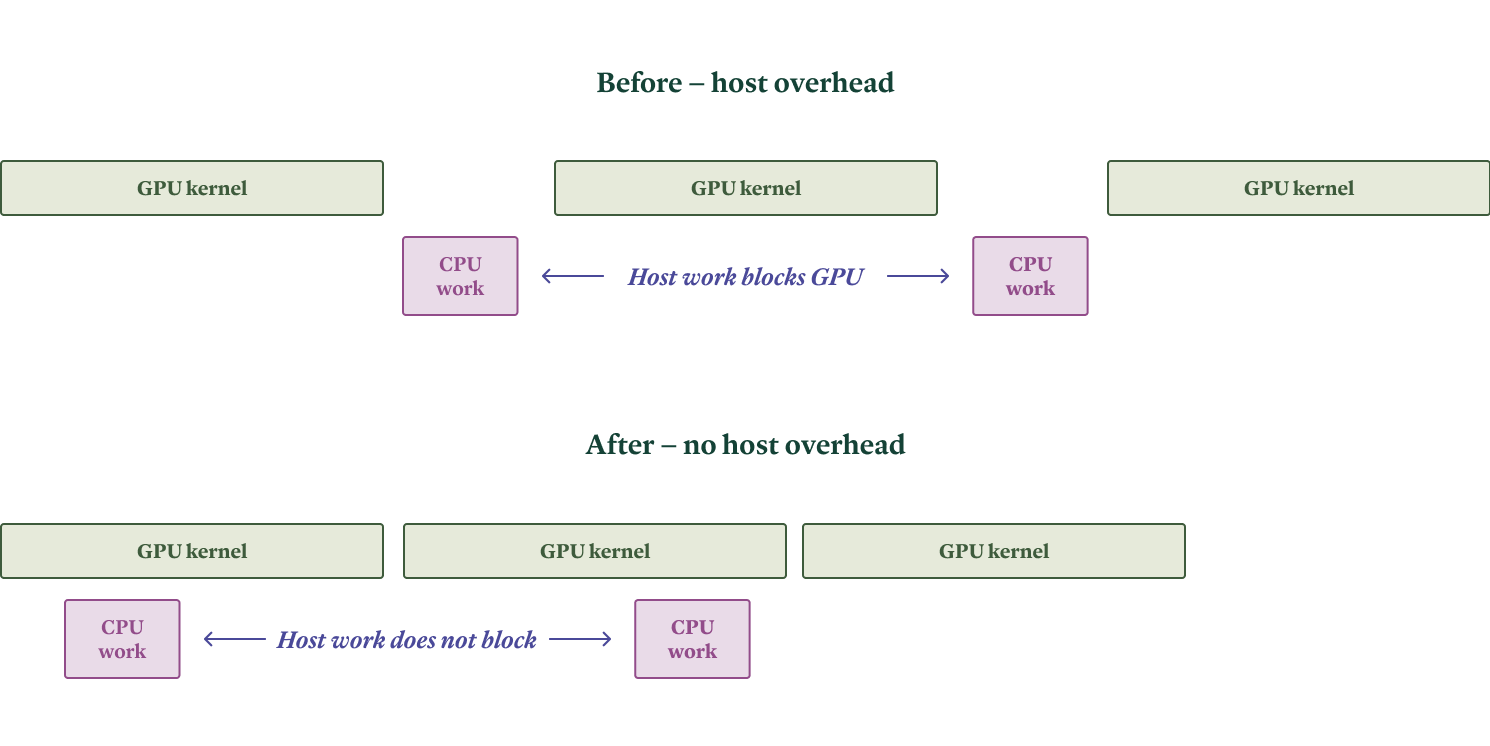

Challenge 1: Avoiding Host Overhead

The primary constraint is time: the system often has only a few hundred milliseconds to respond. This means performance penalties from using interpreted languages like Python start to matter critically. Since leading LLM inference engines are written mostly in Python for development speed, they must be carefully architected to prevent CPU-side work from blocking GPU work—a problem known as host overhead.

In our experience, the SGLang inference engine generally exhibits lower host overhead, making it a strong candidate for latency-sensitive applications.

Challenge 2: Reducing Communication Latency

Again, the clock is ticking: only a few hundred milliseconds to respond. For a cloud service (not a local app), network communication between the client and server can introduce significant latency at this scale. Even at the speed of light, a round trip from a user to a single, centralized data center can add tens to hundreds of milliseconds.
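A quick lower bound shows why distance matters. The sketch below assumes the signal travels through optical fiber at roughly two thirds of the vacuum speed of light and ignores routing, queuing, and protocol handshakes, so real round trips are slower. The function name and the example distances are illustrative.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458
FIBER_FRACTION = 2 / 3  # light in optical fiber travels at roughly two thirds of c


def min_round_trip_ms(distance_km: float) -> float:
    """Physical lower bound on round-trip time over a fiber path of this length."""
    one_way_s = distance_km / (SPEED_OF_LIGHT_KM_PER_S * FIBER_FRACTION)
    return 2 * one_way_s * 1000


far_ms = min_round_trip_ms(10_000)   # e.g. a user on another continent
near_ms = min_round_trip_ms(500)     # e.g. a user near a regional data center
```

At 10,000 km the floor is already about 100 ms, a large share of a budget of a few hundred milliseconds, while a regional deployment at 500 km costs only a few milliseconds.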

The solution is to deploy both routing proxies and GPU capacity to “the edge”: data centers geographically closer to your users. This can be challenging because GPU availability varies across regions and cloud providers. The fix is to aggregate capacity across multiple clouds to enable truly regionalized deployments.

Challenge 3: Handling Multi-Turn Conversations Efficiently

Online workloads are interactive in their request patterns. A user responds to the AI, and the AI must respond in turn, maintaining context.

Efficient multi-turn LLM inference is inherently stateful, even though it is usually served over stateless protocols like HTTP. Clients can resend the full conversation history on every turn, but reprocessing it from scratch is expensive: contemporary Transformer models have computation needs that scale quadratically with conversation length. This can be traded for linear computation by storing a linear amount of model activations, known as the key-value (KV) cache.

The solution is to route requests to specific LLM inference replicas based on the information needed to populate this cache—namely, the request’s conversational prefix. This “prefix-aware” routing can be as simple as sticky sessions per conversation or involve deeper inspection of the cache state.
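The simplest form of this routing, sticky sessions, can be sketched in a few lines. The `pick_replica` function and the replica names are hypothetical; the only real requirement is that the same conversation always maps to the same replica.

```python
import hashlib


def pick_replica(conversation_id: str, replicas: list[str]) -> str:
    """Map a conversation to one replica so later turns can reuse its KV cache."""
    # Use a stable hash: Python's built-in hash() of a str is salted per process.
    digest = hashlib.sha256(conversation_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(replicas)
    return replicas[index]


replicas = ["replica-0", "replica-1", "replica-2"]
first_turn = pick_replica("conversation-42", replicas)
second_turn = pick_replica("conversation-42", replicas)
```

A plain modulo reshuffles most conversations whenever the replica list changes, so a production router would likely need something sturdier, such as consistent hashing or direct inspection of each replica's cached prefixes.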

Challenge 4: Hitting the Memory Wall

The bottleneck operations in LLM inference with KV caching have low arithmetic intensity. This means the process is memory-bound, not compute-bound.

Intuitively, generating one token per request requires loading billions of model parameters from memory into registers, only to use them for a handful of operations. This is a terrible mismatch for GPUs, which are designed for high arithmetic intensity. Furthermore, even with request batching, conversational prefixes are often distinct, forcing the loading of unique parts of the KV cache for each request.

The latency implications are severe. A single forward pass on a modern LLM, bound by memory bandwidth, takes several milliseconds. Autoregressive (sequential) generation stacks these latencies, quickly consuming a tight latency budget.
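A back-of-the-envelope calculation makes "low arithmetic intensity" concrete. The sketch assumes each weight is read once per decode step and used for one multiply and one add per request in the batch, and it ignores KV cache reads, which only make matters worse. The hardware figures are hypothetical round numbers, not any specific GPU's specification.

```python
def decode_intensity(batch_size: int, bytes_per_param: float) -> float:
    """FLOPs performed per byte of weights read in one decode step."""
    flops_per_param = 2 * batch_size  # one multiply and one add per request
    return flops_per_param / bytes_per_param


def ridge_point(peak_flops_per_s: float, bandwidth_bytes_per_s: float) -> float:
    """Intensity below which a kernel is limited by memory bandwidth."""
    return peak_flops_per_s / bandwidth_bytes_per_s


def is_memory_bound(batch_size: int, bytes_per_param: float,
                    peak_flops_per_s: float, bandwidth_bytes_per_s: float) -> bool:
    """True when the decode step cannot keep the arithmetic units busy."""
    return (decode_intensity(batch_size, bytes_per_param)
            < ridge_point(peak_flops_per_s, bandwidth_bytes_per_s))


# Hypothetical accelerator: 1e15 FLOP/s peak, 3e12 bytes/s memory bandwidth.
PEAK = 1e15
BANDWIDTH = 3e12
single_request = is_memory_bound(1, 2.0, PEAK, BANDWIDTH)
large_batch = is_memory_bound(1024, 2.0, PEAK, BANDWIDTH)
```

With 16-bit weights, a single request does about one FLOP per byte loaded, while this hypothetical chip needs several hundred FLOPs per byte to become compute-bound.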

How do we fight the memory wall?

- Increase Memory Bandwidth:
  - Hardware: Use the latest GPU architectures (like H100, B200) which offer massive generational improvements in memory bandwidth.
  - Software/Parallelism: Use multiple GPUs and employ tensor parallelism. This technique splits the work of large matrix multiplications across accelerators, aggregating their memory bandwidth. This requires a fast, low-latency interconnect like NVLink.
- Reduce Memory Demand:
  - Quantization: Reduce the precision of model weights, e.g., to 8-bit (FP8) or 4-bit (FP4). Modern hardware supports these formats natively, offering a smaller memory footprint and faster kernels with minimal quality loss for many models.
  - Choose Efficient Architectures: Models with a Mixture of Experts (MoE) structure are designed to reduce active parameter count per forward pass, directly addressing memory bandwidth demands. When comparing models, look at active parameters, not just total parameters.
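Each of these levers appears as one term in a simple lower bound on the time for a single forward pass: bytes of active weights divided by aggregate memory bandwidth. The sketch below is an idealized estimate that assumes perfect tensor-parallel scaling and ignores communication and KV cache traffic. The model size and bandwidth are hypothetical.

```python
def min_step_latency_ms(active_params_billions: float, bytes_per_param: float,
                        bandwidth_tb_per_s: float, tensor_parallel: int = 1) -> float:
    """Idealized lower bound on one memory-bound forward pass, in milliseconds."""
    weight_bytes = active_params_billions * 1e9 * bytes_per_param
    # Tensor parallelism splits the weights, so per-GPU bandwidths add up.
    aggregate_bandwidth = bandwidth_tb_per_s * 1e12 * tensor_parallel
    return weight_bytes / aggregate_bandwidth * 1000


# Hypothetical 70B-active-parameter model on GPUs with 3 TB/s of bandwidth each.
bf16_one_gpu = min_step_latency_ms(70, 2.0, 3.0)
fp8_one_gpu = min_step_latency_ms(70, 1.0, 3.0)
fp8_four_gpus = min_step_latency_ms(70, 1.0, 3.0, tensor_parallel=4)
```

Halving the bytes per parameter, quadrupling the GPUs, or choosing a model with fewer active parameters each shrinks the bound proportionally. In practice interconnect overhead eats into the tensor-parallel gain.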

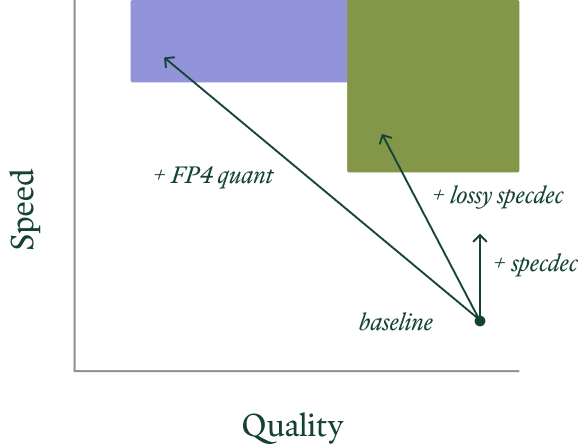

Challenge 5: “Cheating” the Speed of Light with Speculative Decoding

Eventually, hardware limits are inescapable. You hit the metaphorical “speed of light” for your chosen setup.

You can’t break the speed of light, but you can cheat it.

The key technique is speculative decoding. It exploits the arithmetic throughput left idle by naive, single-token autoregressive inference.

A smaller, faster “speculator” model drafts several potential future tokens in sequence. The larger, target model then judges all these drafts in parallel in a single forward pass. Because inference is memory-bound, there are often spare FLOPs available to run the speculator and to verify its drafts. The system preserves output quality by keeping a drafted token only when the target model’s own predictions support it, and by falling back to the target model’s choice at the first rejected token.

While the idea is established, recent advancements like the EAGLE-3 speculator training method have made it much more practical and effective, producing speculators with very high acceptance rates. SGLang has excellent support for speculative decoding, solidifying its position for low-latency serving.
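The draft-then-verify loop can be sketched in its simplest form, greedy verification, where a drafted token is kept only if it equals the token the target model would have picked. This is a toy illustration, not SGLang's or EAGLE-3's implementation: the two "models" are hypothetical functions over integer tokens, and sampling-based variants use a probabilistic acceptance test instead of exact matching.

```python
from typing import Callable, List

NextToken = Callable[[List[int]], int]


def speculative_step(prefix: List[int], draft_next: NextToken,
                     target_next: NextToken, num_draft: int) -> List[int]:
    """Return the tokens produced by one draft-then-verify round."""
    # 1. Draft several tokens sequentially with the small model.
    drafts: List[int] = []
    for _ in range(num_draft):
        drafts.append(draft_next(prefix + drafts))
    # 2. Check each drafted position against the target model. A real engine
    #    scores all positions in one batched forward pass; this loop is only
    #    for clarity.
    accepted: List[int] = []
    for token in drafts:
        expected = target_next(prefix + accepted)
        if token != expected:
            accepted.append(expected)  # keep the target's token, drop the rest
            return accepted
        accepted.append(token)
    # 3. Every draft matched, and the same pass yields one bonus token.
    accepted.append(target_next(prefix + accepted))
    return accepted


def target_next(context: List[int]) -> int:
    """Toy target model: counts upward modulo 10."""
    return (context[-1] + 1) % 10


def draft_next(context: List[int]) -> int:
    """Toy speculator: agrees with the target except right after token 4."""
    return 0 if context[-1] == 4 else (context[-1] + 1) % 10


with_mismatch = speculative_step([1], draft_next, target_next, num_draft=4)
all_accepted = speculative_step([1], target_next, target_next, num_draft=2)
```

Either way, the emitted tokens are exactly what the target model alone would have generated. The speculator only changes how many of them arrive per target forward pass.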

How To Implement for Low Latency

To optimize online applications for the lowest possible latency, follow this blueprint:

- Engine: Use SGLang to minimize host overhead and leverage speculative decoding.
- Quantization: Use FP8 quantization on H100/H200 GPUs for smaller memory footprint and faster kernels.
- Parallelism: Apply extra tensor parallelism beyond what’s needed for model capacity to further reduce memory read latency.
- Speculation: Integrate an EAGLE-3 speculator model.
- Deployment: Deploy using an ultra-low-overhead web server with session-based (prefix-aware) routing, placed in regions close to your users.

The Future of Online Serving

Due to massive investment in chatbots, this workload has seen intense engineering focus. Its trajectory is somewhat clearer.

We expect more “lossy” optimizations that trade minute amounts of quality for significant speed gains to become important. Examples include approximate KV caching, layer skipping, and model pruning. These techniques are already mature in other domains like image generation.

The right optimization strategy depends on your workload’s specific tolerance for latency versus required output quality.

On the hardware side, the trend is toward tightly interconnected, rack-scale systems designed specifically for massive inference workloads. However, we believe the relative importance of pure online/chat workloads may decrease over time as the initial hype settles. The next “killer app” may well be long-running background agents (like AI coders or researchers) that have the patience of machines, not humans. This leads us to our final workload category.

Semi-Online Workloads: The Need for Elastic Flexibility

Imagine these use cases:

- A document processing platform where a user might upload a single form for instant analysis or drop their entire company’s document archive for overnight processing.
- An AI news agency that needs to scale up hundreds of analysis agents within minutes in response to breaking news, but also produces a consolidated “daily digest” on a fixed schedule.

These systems are semi-online. They sometimes respond to a waiting human; other times they pass results to another system in a pipeline. Even when serving users directly, the interaction is less tightly coupled than a chat. Their defining characteristic is burstiness—load can spike to hundreds of times the baseline for minutes or tens of minutes.

The Core Challenge: Taming the Peak-to-Average Ratio

This high peak-to-average ratio creates a fundamental cost problem. System costs are typically driven by the resources needed to service peak demand, but the value or revenue generated is proportional to the average demand. If you provision for the peak, you waste money during long valleys of low usage.

Static systems must be provisioned for the peak (shaded area), but only collect value based on average usage (area under the curve). The gap represents inefficiency and cost.

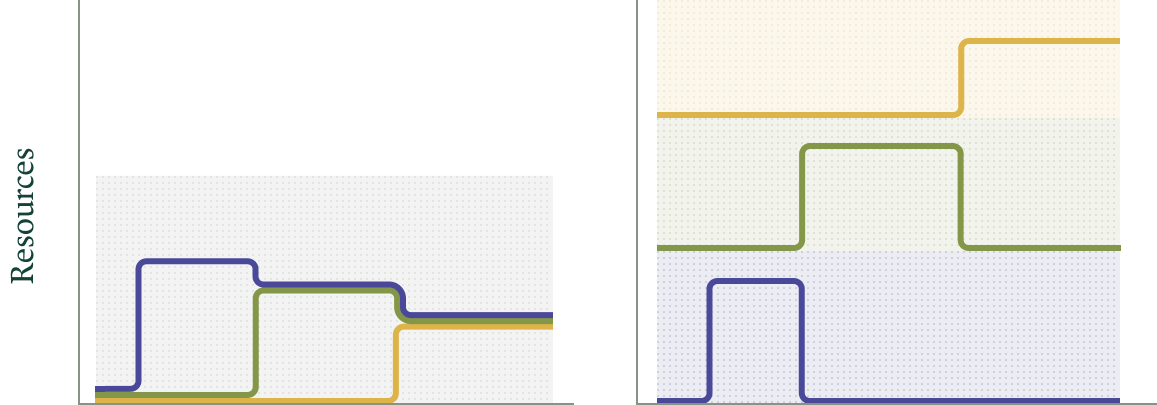

The proven solution is aggregation and multi-tenancy. By serving many different, uncorrelated workloads on shared hardware, their independent peaks and valleys average out into a much smoother aggregate demand curve. This dramatically lowers the effective peak-to-average ratio for the provider, who can then maintain a smaller buffer of spare capacity.

A multi-tenant system (left) smooths aggregate demand, reducing cost. A collection of single-tenant systems (right) incurs a cost close to the sum of their individual peaks.
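A deterministic toy example shows the smoothing effect. It assumes eight tenants whose bursts never overlap, which is the best case for aggregation. The demand shapes and the `tenant_demand` helper are invented for illustration.

```python
def tenant_demand(spike_slot: int, slots: int, baseline: int = 1, spike: int = 100) -> list[int]:
    """Demand per time slot for one tenant: flat baseline plus a single burst."""
    return [spike if t == spike_slot else baseline for t in range(slots)]


def peak_to_average(demand: list[int]) -> float:
    """Ratio of the worst slot to the mean slot."""
    return max(demand) / (sum(demand) / len(demand))


slots = 24
tenants = [tenant_demand(i, slots) for i in range(8)]  # bursts in different slots
aggregate = [sum(column) for column in zip(*tenants)]

sum_of_peaks = sum(max(d) for d in tenants)  # capacity for 8 single-tenant systems
shared_peak = max(aggregate)                 # capacity for one shared pool
single_ratio = peak_to_average(tenants[0])
shared_ratio = peak_to_average(aggregate)
```

Here the shared pool needs capacity for 107 units instead of 800, and its peak-to-average ratio drops from roughly 20 to under 3. Correlated bursts across tenants would shrink that benefit.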

The Critical Optimization: Slashing Cold Starts from Minutes to Seconds

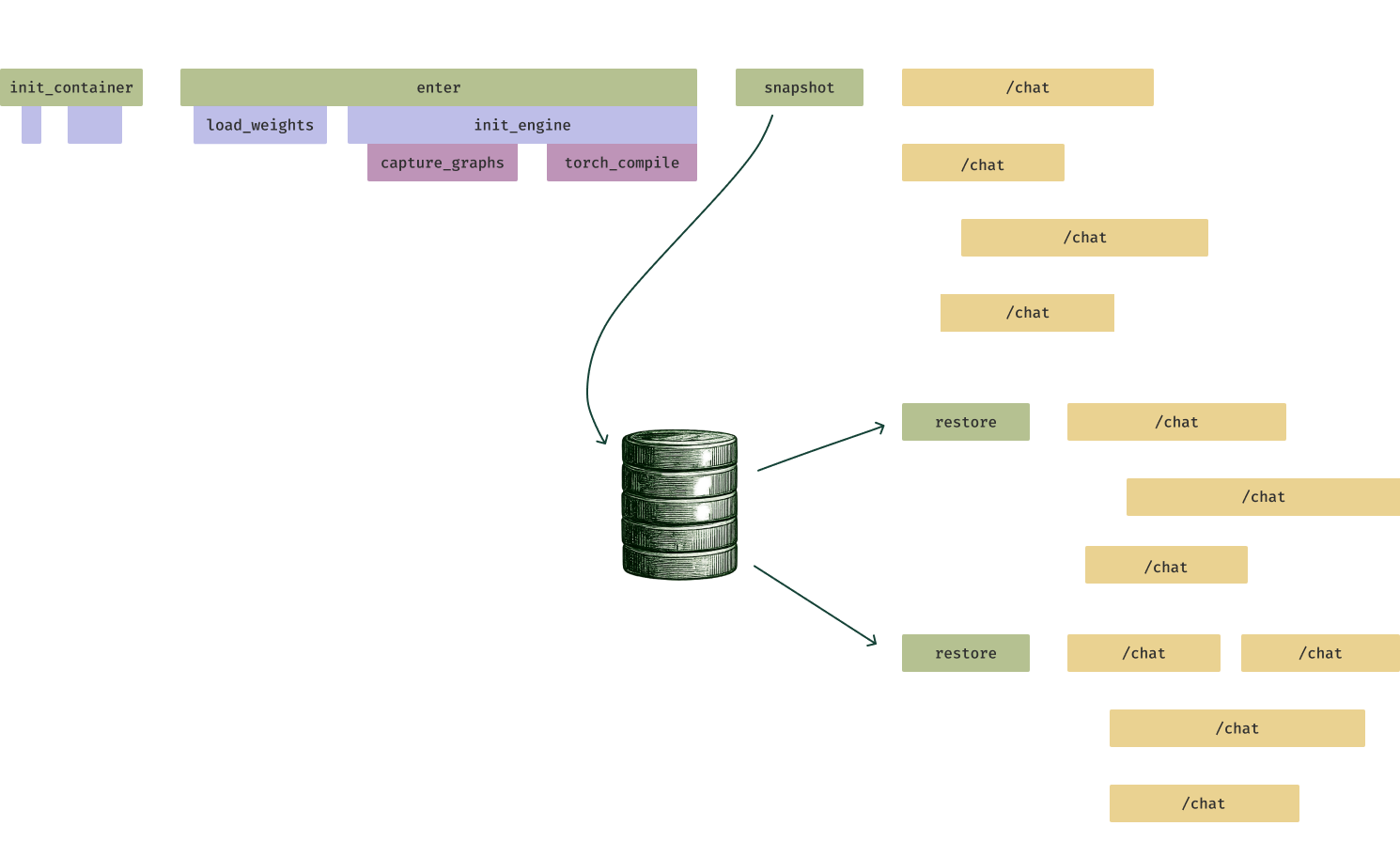

Multi-tenancy introduces a key challenge: cold start latency. Even if resources are available in a pool, configuring them to handle a request takes time—containers must boot, and the inference engine must initialize.

- Slow Container Start: Large container images can take minutes to launch. Optimizations include mixing eager pre-fetching of essential files with lazy-loading of less critical ones.
- Slow Engine Initialization: Steps like Torch JIT compilation, which optimize inference, can themselves take minutes, during which the server is unusable.

Our solution is GPU Memory Snapshotting. Just before an inference server replica is ready to serve, we dump its entire in-memory state (model weights, compiled kernels, etc.) to a file. New replicas boot by loading this snapshot directly, bypassing all initialization and compilation steps. This converts many small I/O operations into one large, efficient read.

Memory snapshotting can cut LLM inference server start times from minutes to seconds by restoring from a serialized state, enabling rapid scale-up during traffic bursts.
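The snapshot-and-restore pattern can be illustrated with a CPU-only analogy. This is not how GPU memory snapshotting is implemented: `slow_initialize` is a hypothetical stand-in for weight loading and kernel compilation, and `pickle` stands in for the real snapshot format.

```python
import pickle
import tempfile
import time
from pathlib import Path


def slow_initialize() -> dict:
    """Stand-in for loading weights and compiling kernels."""
    time.sleep(0.2)  # pretend this is minutes of setup
    return {"weights": list(range(1000)), "compiled": True}


def start_replica(snapshot_path: Path) -> dict:
    """Restore from a snapshot if one exists, otherwise initialize and save one."""
    if snapshot_path.exists():
        # Warm path: a single large read replaces all initialization work.
        return pickle.loads(snapshot_path.read_bytes())
    state = slow_initialize()
    snapshot_path.write_bytes(pickle.dumps(state))
    return state


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "replica.snapshot"
    t0 = time.perf_counter()
    cold_state = start_replica(path)   # no snapshot yet: pays for initialization
    cold_seconds = time.perf_counter() - t0
    t1 = time.perf_counter()
    warm_state = start_replica(path)   # snapshot present: restores directly
    warm_seconds = time.perf_counter() - t1
```

The second start skips `slow_initialize` entirely and still ends up with identical state, which is the property that lets new replicas join a pool quickly during a burst.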

How To Implement for Flexible Scaling

To build systems that can handle semi-online, bursty workloads economically:

- Leverage Autoscaling: Use cloud infrastructure that provides fast-booting, auto-scaling GPU resources.
- Choose Available Hardware: While next-gen GPUs (B200) are scarce, current-gen H100/H200s are more available and excellent for FP8-quantized models.
- Deploy for the Web: Use a deployment decorator or setup that turns your Python inference code into a scalable web service with an OpenAI-compatible API.
- Tune Autoscaling: Configure your auto-scaling policy to absorb small bursts gracefully (e.g., by allowing a higher `max_inputs` than `target_inputs`).
- Pick Your Engine: Choose between vLLM and SGLang based on model compatibility and other needs.
- Use Snapshots: Implement GPU memory snapshotting to eliminate slow engine initialization, especially for engines that rely on JIT compilation.
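The autoscaling tuning point can be sketched as a small policy function. It assumes `target_inputs` is the per-replica concurrency the autoscaler aims for and `max_inputs` is the hard per-replica ceiling. Those semantics, and the `absorb_burst` helper itself, are assumptions made for illustration rather than any platform's documented behavior.

```python
import math


def desired_replicas(inflight: int, target_inputs: int) -> int:
    """Replica count that brings per-replica load back to the target."""
    return max(1, math.ceil(inflight / target_inputs))


def absorb_burst(inflight: int, replicas: int, target_inputs: int, max_inputs: int) -> dict:
    """Describe what happens to a burst before any new replica has booted."""
    capacity_now = replicas * max_inputs  # headroom above target soaks up bursts
    return {
        "served_immediately": min(inflight, capacity_now),
        "queued": max(0, inflight - capacity_now),
        "scale_to": desired_replicas(inflight, target_inputs),
    }


small_burst = absorb_burst(inflight=20, replicas=2, target_inputs=8, max_inputs=12)
large_burst = absorb_burst(inflight=40, replicas=2, target_inputs=8, max_inputs=12)
```

With headroom between the two settings, the small burst is served entirely by existing replicas while the scale-up is still in flight. Only the large burst leaves requests waiting on cold starts.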

Frequently Asked Questions (FAQ)

Q: My application has unpredictable, spiky traffic. Should I provision for the peak or the average?

A: You should not statically provision for either. Use a cloud-native service with robust, fast autoscaling. It will automatically add replicas when load rises and remove them when load falls, ensuring you pay for what you use, not what you might need.

Q: Do cold starts really impact user experience?

A: Absolutely. If a traffic burst arrives and new server replicas take 2-3 minutes to boot, your users during that period will experience severe latency or failures. Reducing cold starts to seconds is critical for maintaining responsiveness during scaling events.
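A rough backlog estimate shows the scale of the problem. The request rates below are hypothetical, and the model assumes arrivals stay constant while new replicas boot.

```python
def backlog_after_cold_start(arrivals_per_s: float, served_per_s: float, cold_start_s: float) -> float:
    """Requests left waiting by the time new replicas come online."""
    return max(0.0, arrivals_per_s - served_per_s) * cold_start_s


# Hypothetical burst: 50 requests/s arriving, existing replicas serve 10/s.
slow_boot_backlog = backlog_after_cold_start(50.0, 10.0, cold_start_s=150.0)
fast_boot_backlog = backlog_after_cold_start(50.0, 10.0, cold_start_s=5.0)
```

Under these assumptions a 150-second cold start strands thousands of requests, while a 5-second one strands a couple of hundred.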

The Future is Semi-Online

We expect semi-online applications to become increasingly prevalent as the field matures. The obvious first uses are pure offline analytics and pure online chat, but the vast middle ground—tasks combining patience, bursts, and interaction—is where much future innovation will lie.

Long-running autonomous agents exemplify this shift. They operate with machine patience, not human urgency, undertaking complex, multi-step tasks that may involve bursts of compute. We look forward to seeing more of these sophisticated workloads emerge and scale.

Conclusion: Taking Control of Your Inference Future

We are still in the early innings of the LLM engineering era, despite years of rapid progress in the models themselves. The initial advantage held by proprietary model providers and their integrated stacks is now being eroded by open models and open-source software.

As these foundational technologies commoditize, the additional benefits of customization, cost control, and optimization for your specific workload tilt the balance increasingly in favor of in-house inference.

This path requires more engineering effort and a commitment to learning. It also benefits from a strong community sharing knowledge, building skills, and contributing to open-source projects. The first step on this path is the one you’re taking now: understanding that not all workloads are created equal, and architecting your systems accordingly. Start by categorizing your task as offline, online, or semi-online, and choose your tools and strategies from there.